Chain-of-Thought Prompting Elicits Reasoning in Large Language Models

Wei et al. (Google Research, Brain Team) | NeurIPS 2022

💡 Core Idea

Chain of Thought = A series of intermediate reasoning steps that lead to the final answer

Simply provide a few CoT demonstrations as exemplars in few-shot prompting
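A minimal Python sketch of how such a prompt could be assembled. It assumes the ⟨input, chain of thought, output⟩ exemplar format and an exemplar ending of "The answer is X."; the names `Exemplar` and `build_prompt` are hypothetical, and the call to an actual model is left out. Setting `with_chain=False` drops the reasoning, which gives a standard few-shot prompt for comparison.

```python
from dataclasses import dataclass


@dataclass
class Exemplar:
    """One few-shot demonstration: <input, chain of thought, output>."""
    question: str
    chain: str   # intermediate reasoning steps
    answer: str  # final answer


def build_prompt(exemplars: list[Exemplar], question: str, with_chain: bool = True) -> str:
    """Concatenate exemplars, then the test question with an open 'A:' slot.

    with_chain=True  -> chain-of-thought prompt
    with_chain=False -> standard (answer-only) prompt
    """
    blocks = []
    for ex in exemplars:
        reasoning = f"{ex.chain} " if with_chain else ""
        blocks.append(f"Q: {ex.question}\nA: {reasoning}The answer is {ex.answer}.")
    blocks.append(f"Q: {question}\nA:")  # the model continues from here
    return "\n\n".join(blocks)


roger = Exemplar(
    question="Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
             "Each can has 3 tennis balls. How many tennis balls does he have now?",
    chain="Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11.",
    answer="11",
)

# Hypothetical test question, for illustration only.
print(build_prompt([roger], "Sam has 3 boxes of 4 pens. He gives away 5 pens. How many pens are left?"))
```

The model is then expected to continue after the final `A:` with its own chain of reasoning followed by "The answer is ...".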

⚖️ Standard vs Chain-of-Thought Prompting

Standard Prompting

Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?

A: The answer is 11.

❌ Answer-only exemplar: model is often wrong on new multi-step questions

Chain-of-Thought

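A: Roger started with 5 balls. 2 cans × 3 balls = 6 balls. 5 + 6 = 11. The answer is 11.

✓ Step-by-step exemplar: model imitates the steps and is far more often correct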

🔑 Key Properties

1️⃣ Decomposes multi-step problems

2️⃣ Interpretable window into the model's reasoning

3️⃣ Applicable, in principle, to any task humans can solve via language

4️⃣ No finetuning required

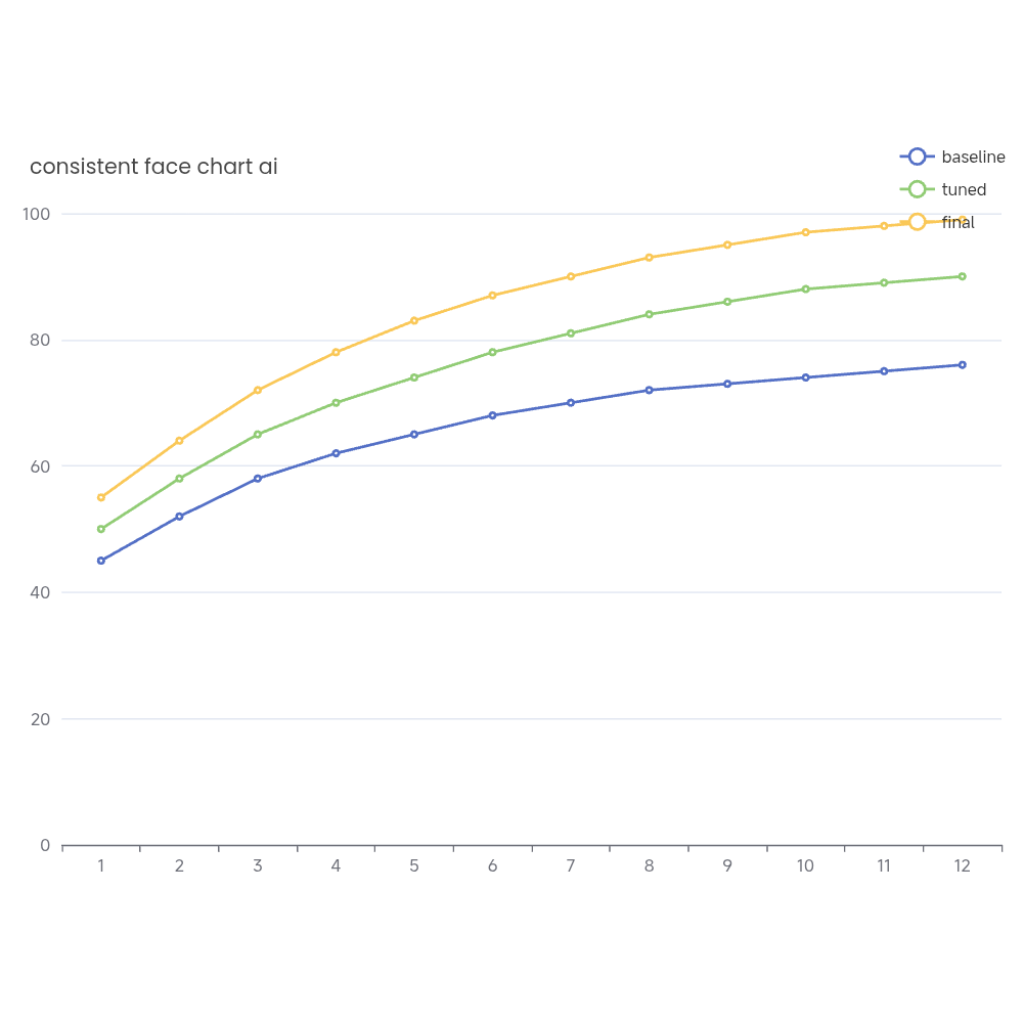

📈 Emergent Ability

CoT only yields gains with models of ~100B+ parameters

Small models produce fluent but illogical chains, often scoring below standard prompting

📊 Key Results

GSM8K (Math): 18% (Standard) → 57% (CoT), PaLM 540B
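Using the rounded GSM8K figures above, the size of the jump works out as follows (simple arithmetic on the reported numbers, not a separately reported result):

```latex
\Delta = 57\% - 18\% = 39\ \text{percentage points}, \qquad \frac{57\%}{18\%} \approx 3.2\times
```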

🧪 Benchmarks Tested

🔢 Arithmetic

GSM8K, SVAMP, ASDiv, AQuA, MAWPS

🧠 Commonsense

CSQA, StrategyQA, Date Understanding, Sports Understanding, SayCan

🔣 Symbolic

Last Letter Concatenation, Coin Flip
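To make the two symbolic tasks concrete, here is a sketch of their ground-truth logic in Python. The function names, the person names, and the exact question template are illustrative assumptions, not the paper's released code.

```python
def last_letter_concat(name: str) -> str:
    """Last Letter Concatenation: join the last letter of each word."""
    return "".join(word[-1] for word in name.split())


def coin_flip_is_heads(flips: list[bool]) -> bool:
    """Coin Flip: the coin starts heads up; each True entry is one person flipping it.

    An even number of flips leaves it heads up, an odd number leaves it tails up.
    """
    return sum(flips) % 2 == 0


def coin_flip_question(people: list[str], flips: list[bool]) -> str:
    """Render a coin-flip question in natural language (template is an assumption)."""
    steps = " ".join(
        f"{person} {'flips' if flipped else 'does not flip'} the coin."
        for person, flipped in zip(people, flips)
    )
    return f"A coin is heads up. {steps} Is the coin still heads up?"


print(last_letter_concat("Elon Musk"))  # nk
print(coin_flip_question(["Ana", "Ben"], [True, False]))
print("yes" if coin_flip_is_heads([True, False]) else "no")  # no
```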

🎯 Task Types & Results

Arithmetic Reasoning: SOTA on GSM8K (57% vs 55% prior best)

Commonsense Reasoning: SOTA on StrategyQA (75.6% vs 69.4% prior best)

Symbolic Reasoning: out-of-domain (OOD) generalization to inputs longer than those in the exemplars
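The length-generalization setup can be sketched as follows: exemplars use a fixed number of steps (2 here), while test questions use more (3 or 4). The generator, placeholder names, seed, and example counts are assumptions for illustration only.

```python
import random


def make_coin_example(n_steps: int, rng: random.Random) -> tuple[str, str]:
    """Hypothetical generator: a coin-flip question with n_steps people and its yes/no answer."""
    flips = [rng.random() < 0.5 for _ in range(n_steps)]
    steps = " ".join(
        f"Person{i + 1} {'flips' if flipped else 'does not flip'} the coin."
        for i, flipped in enumerate(flips)
    )
    question = f"A coin is heads up. {steps} Is the coin still heads up?"
    answer = "yes" if sum(flips) % 2 == 0 else "no"  # even number of flips -> still heads
    return question, answer


rng = random.Random(0)  # fixed seed for reproducibility

# In-distribution: every few-shot exemplar has exactly 2 steps (count of 8 is arbitrary).
exemplars = [make_coin_example(2, rng) for _ in range(8)]

# Out-of-distribution: test questions have 3 or 4 steps, longer than any exemplar.
ood_tests = [make_coin_example(n, rng) for n in (3, 4) for _ in range(5)]

for question, answer in ood_tests[:2]:
    print(question, "->", answer)
```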

🤖 Models Tested

• GPT-3 (175B)

• LaMDA (137B)

• PaLM (540B)

• Codex

• UL2 (20B)

No finetuning: prompting only! Sizes shown are the largest; smaller variants were also tested to study scaling.

✨ Key Takeaways

✓ Simple yet powerful

✓ Emergent at scale

✓ Broadly applicable

✓ No training needed

✓ State-of-the-art results

📝 Prompt Format

Each exemplar is a triple: 〈 Input, Chain of Thought, Output 〉
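Because every exemplar ends with its output, a model completion can be split back into its chain of thought and final answer. A sketch, assuming the exemplars end with "The answer is X." (as in the Roger example) and that the model may run on into a new "Q:"; `split_completion` is a hypothetical helper.

```python
from typing import Optional, Tuple

ANSWER_MARKER = "The answer is"  # assumed to match the exemplars' final sentence


def split_completion(completion: str) -> Tuple[str, Optional[str]]:
    """Split a CoT completion into (chain_of_thought, final_answer).

    Returns (text, None) when no answer marker is present.
    """
    # Models often keep generating a new "Q: ..." exemplar; drop everything from there.
    completion = completion.split("\nQ:")[0]
    idx = completion.rfind(ANSWER_MARKER)
    if idx == -1:
        return completion.strip(), None
    chain = completion[:idx].strip()
    answer = completion[idx + len(ANSWER_MARKER):].strip().rstrip(".").strip()
    return chain, answer


chain, answer = split_completion(
    " Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls."
    " 5 + 6 = 11. The answer is 11.\n\nQ: next question"
)
print(answer)  # 11
```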

⚠️ Limitations

• Requires large models (~100B+)

• No guarantee of correct reasoning

• Costly to serve in production
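Since nothing guarantees that a generated chain is correct, one cheap partial check is to recompute any explicit arithmetic steps. The sketch below (hypothetical `check_steps`, non-negative integer operands only) flags slips such as "5 + 6 = 12"; it cannot detect a chain whose arithmetic is right but whose logic is wrong.

```python
import re
from fractions import Fraction

# Matches simple binary steps with non-negative integers, e.g. "2 × 3 = 6" or "5 + 6 = 11".
STEP_RE = re.compile(r"(\d+)\s*([-+×x*/])\s*(\d+)\s*=\s*(\d+)")

OPS = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "×": lambda a, b: a * b,
    "x": lambda a, b: a * b,
    "*": lambda a, b: a * b,
    "/": lambda a, b: a / b,
}


def check_steps(chain: str) -> list[tuple[str, bool]]:
    """Return each matched 'a op b = c' step and whether its arithmetic holds."""
    results = []
    for m in STEP_RE.finditer(chain):
        a, op, b, c = Fraction(m.group(1)), m.group(2), Fraction(m.group(3)), Fraction(m.group(4))
        if op == "/" and b == 0:
            ok = False  # division by zero is never a valid step
        else:
            ok = OPS[op](a, b) == c  # Fraction keeps division exact
        results.append((m.group(0), ok))
    return results


print(check_steps("2 × 3 = 6. 5 + 6 = 11."))  # [('2 × 3 = 6', True), ('5 + 6 = 11', True)]
print(check_steps("2 × 3 = 6. 5 + 6 = 12."))  # [('2 × 3 = 6', True), ('5 + 6 = 12', False)]
```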

🚀 Impact

Foundational technique for modern LLM reasoning; inspired many follow-up works, such as Self-Consistency and Tree of Thoughts.